Mesquite MoCap – Open-Source Wearable 6-DoF Motion Capture

Research systems, installations, and experiments spanning embodied perception, computational media, and AI-driven tools.

Systems-focused work in embodied perception, sensor fusion, low-cost motion capture, and temporal modeling. These projects emphasize robust hardware-software pipelines, reproducible benchmarks, and deployable prototypes.

Open-source, wireless full-body 6-DoF motion capture using low-cost IMU nodes.

A flexible, low-cost smartphone rig for experimental multi-view 3D capture.

A long-running environmental station for logging and visualizing environmental change.

Artistic and computational-media projects that use AI, sensors, and visual media to explore perception, emotion, memory, and environment.

Four-space AI-driven installation about love, decay, and environmental change.

Week-long, four-camera time-lapse dataset and MotionGLB outputs for modeling rose wilting.

Pipeline that turns a single photo into a rigged 3D character for rapid import into virtual environments.

A compact study using satellite imagery and Google Street View to examine how observational systems construct surveillance.

Smaller prototypes and course projects exploring deep learning, temporal models, and generative systems. These sketches inform larger research directions.

Experiments with Temporal Fusion Transformers on financial time-series data.

Experiments with SIREN-based implicit representations and diffusion models.

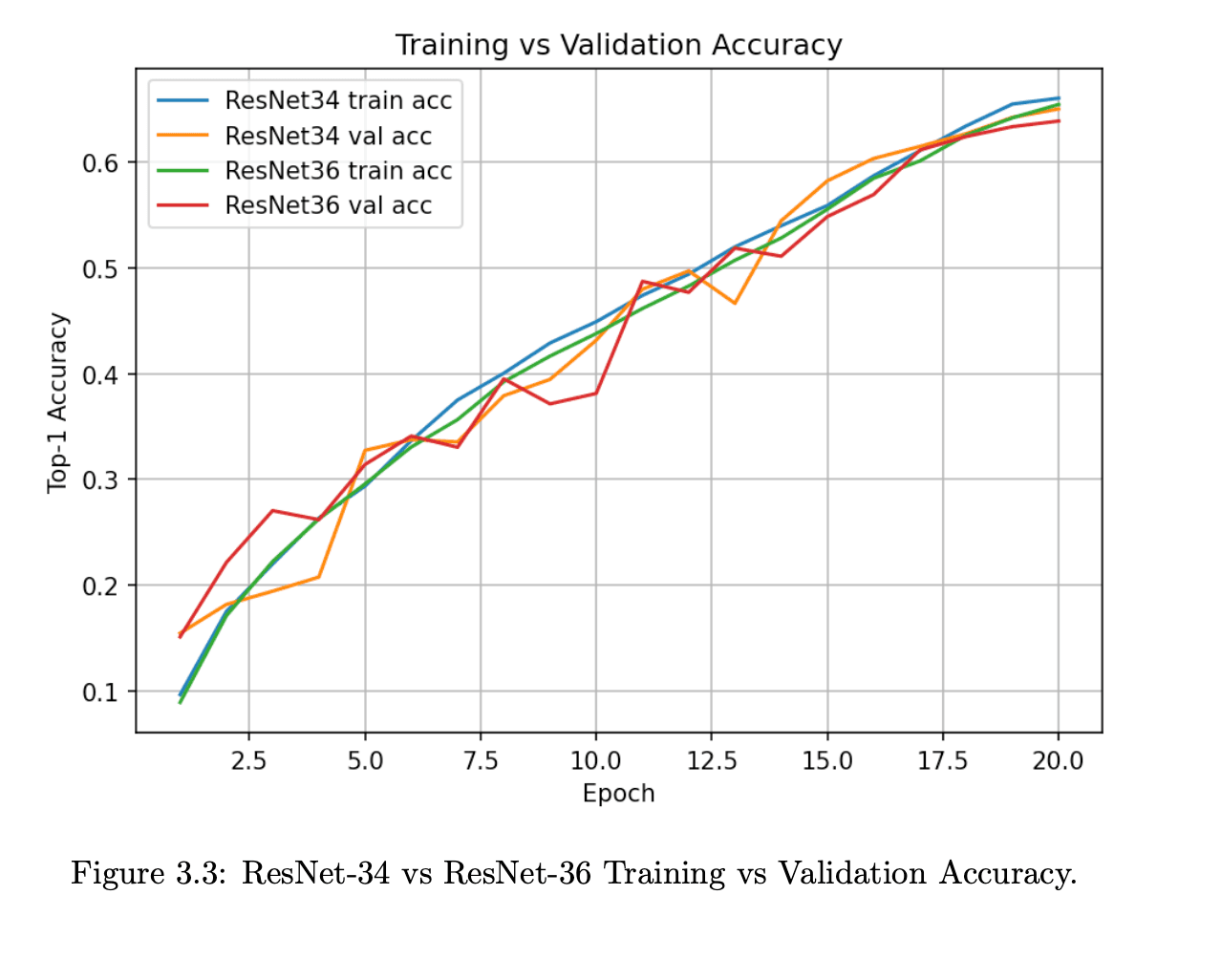

Course and lab experiments exploring modern vision backbones and representation learning.